Science is not an ideology, religion or set of facts. Science is a process for trying to determine a more accurate Truth of what is Real. Even though science is a good process, it is a process that is done by people, so we do have to contend with the flawed human factor. While there are problems with how we implement the scientific process, it is still the best method we humans have developed for understanding the difference between an idea that is false and an idea that is accurate.

Truth, Science and People

Let us talk about truth for a moment.

Objective Reality is the Real universe with a big R, where we cannot truly know what is there because the scope of the universe is just too complex and beyond our real understanding. We can approximate what the universe is, and via the Scientific Process, we can move our model of the universe closer to Real. So Real with a capital is the objective actual universe, while real with a small letter is what we think is real. I will also talk about Truth versus truth, where Truth is Objective Reality True and truth is what we understand to be as close as we can get, which allows us to learn new, more accurate truths later.

If we call Objective Reality the Truth, with a big T, and recall that we can’t truly understand Objective Reality because it is beyond our human comprehension, then we can’t truly know Truth. Our goal, however, is to get as close to Truth as we can – that is, we can approach it, but never truly get there. Anyone who claims they have Truth knowledge is lying. We want experts to tell us the truth, with a small t, which is the closest to Truth they have got. The expert should be able to tell us why they think it is close to Truth, but also why it isn’t the Truth.

How do we find our close to Truth truth? This is the heart of the scientific process. Science is not Truth, it is a process for moving our understanding towards Truth. Essentially it works like this.

- We have an idea about a thing. All ideas that are useful are effectively helping us make predictions. For example:

- Is this thing an apple? If yes, it can be eaten and has certain properties. If it isn’t an apple, it may or may not be edible; check to see if it is in another “edible” category, or in an “inedible” category.

- There is a loud sound on the other side of those trees, which might be a large dangerous animal. If it is dangerous, I should do something to improve safety. If it isn’t, that action is a waste of energy.

- The idea will have rules, and those rules will be used to define what is in that set of rules to be the idea, or out and thus excluded. Those rules give us a prediction of the future. Based on that hypothesis, you predict the next part of the pattern. If what happens next matches your prediction, your hypothesis is an accurate enough model to use. If it doesn’t match, then your hypothesis is wrong, create a new one, or modify the one you have to be more accurate.

That, right there, is the basic idea of science. Trying to get more accurate ideas about the world by testing those ideas through what those ideas predict.

The takeaway message here is that the scientific process helps us to work out better ideas, hypotheses and models. That moves our truth closer to Truth. These better models and predictions can make our lives better. We have got so good at these ideas, tests and predictions that we can make the device that you are reading this on – a device made through science.

Hypotheses, Laws and Theories

You can have an idea about how something might work, but without evidence and a proposed mechanism, all it can ever be is an idea.

A hypothesis is an idea that has both some evidence supporting it to be useful, and a proposed mechanism for how the idea works. Generally, a hypothesis is narrow in scope.

A Scientific Law or Scientific Theory is a broad, well-substantiated, and heavily tested explanation for a set of phenomena, supported by a vast body of evidence. That is, what a hypothesis (or group of hypotheses) becomes when it grows up and survives being thoroughly explored and tested.

Up until the 20th Century, the ideas (hypotheses plural, hypothesis singular) that scientists came up with to explain the universe, and that fit the evidence, were considered to be a verbatim description of how the universe worked. They called them Laws, because that is how it is. Immutable, fixed and works everywhere.

Examples of Scientific Laws:

- Newton’s Laws of Motion,

- Newton’s Law of Gravity,

- Law of the Conservation of Energy

- Faraday’s Laws of Induction

- Moore’s Law

In the 20th Century, these Laws were found to be not quite right and needed to be adjusted. It turns out that the Laws weren’t quite so fixed. As a humble recognition that these rules and equations may change, scientists stopped calling them Laws. For example, Albert Einstein updated the Laws of Gravity, because Newton’s Laws of Gravity worked fine from Venus to the edge of the solar system, but didn’t work very well as close to the sun as Mercury. Einstein’s update works properly in strong gravity fields and at high speeds (approaching the speed of light).

Instead of calling the rules of the universe Laws, the scientists more humbly called these rules Theories.

Examples of Scientific Theories:

- Einstein’s Theory of Special Relativity

- Einstein’s General Theory of Relativity

- Quantum Field Theory

- Darwin’s Theory of Evolution

- The Big Bang Theory

That isn’t to say that Newton’s Laws are wrong. They worked fine to send humans to the moon and back from 1969 to 1972. Einstein extended Newton’s Laws with a new paradigm. When you use Einstein’s Theories and plug in low speed and gravity, they become Newton’s Laws of Motion and Gravity.
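To get a feel for how small Einstein’s correction is at everyday speeds, here is a rough sketch using the Lorentz factor from Special Relativity, 1 / sqrt(1 - v²/c²). It only covers the speed part of the story, not gravity. The speeds in the table are rounded, illustrative values that I have assumed for the example.

```python
import math

C = 299_792_458.0  # speed of light in metres per second


def lorentz_factor(speed):
    """Relativistic correction factor; a value of 1 means no correction to Newton."""
    return 1.0 / math.sqrt(1.0 - (speed / C) ** 2)


# Illustrative speeds in metres per second (rounded, assumed values).
speeds = {
    "car on a highway": 30.0,
    "spacecraft heading to the moon": 11_000.0,
    "half the speed of light": 0.5 * C,
    "90% of the speed of light": 0.9 * C,
}

for label, speed in speeds.items():
    excess = lorentz_factor(speed) - 1.0
    print(f"{label}: correction differs from 1 by {excess:.3g}")
```

At spacecraft speeds the factor differs from 1 by less than one part in a billion, which is why Newton’s equations were good enough for the moon missions. Only at a large fraction of the speed of light does the correction become too big to ignore.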

There is no real difference between a Scientific Law and a Scientific Theory, except that if the idea was crafted and shown to be accurate after 1900, it is probably going to be called a Theory, and if it was pre-1900, it is probably called a Law. To achieve this level of recognition, the idea is both accurate and robust – meaning that it works well to predict what will happen, and it has been thoroughly tested and the plethora of evidence from multiple angles points to the same positive proof / concept / explanation.

Essentially, all Models are Wrong, but…

Remember what I said about Objective Reality being Real but beyond our understanding? All of our science and all of our personal understanding of the world are mere models (Law / Theory / hypothesis) of Objective Reality. Technically, they are all wrong, as they only model what is complex, but in a way that we can comprehend and hopefully use. So long as the model is useful, we can use it, but we need to keep in mind that it is not actually Objective Reality. That is, Laws, Theories and Hypotheses represent a useable model of reality, but are not Reality.

“Essentially, all models are wrong, but some are useful”, George E.P. Box, Empirical Model-Building and Response Surfaces

George Box was a British statistician who worked on quality control, time-series analysis, design of experiments, and Bayesian inference. Box helpfully points out that what we want is a useful model, even though we know it isn’t 100% correct.

That is, don’t settle for models that are useless, and don’t be fooled by models that have yet to be fairly tested.

Isaac Newton created a model of physics back in the 1600’s that we still mostly use to describe how physical objects move. Albert Einstein created a more specifically accurate model with his General Relativity. We often don’t need Einstein’s physics for everyday calculations, because Newton’s is good enough for most things, even though Einstein’s is a bit better.

All of our models have limits of accuracy. So long as the model is useful in getting us mostly the right answers most of the time, it is good enough. Don’t discard the model just because it isn’t perfect, but do replace bad models with better ones.

“Not-Truth”, “Alternative Facts” and Pseudo Science

We have got really good at figuring out what isn’t true. In the early days of the scientific process, we had many hypotheses that seemed sound until we tested them and found that either the idea had no predictive power, or was wrong. For example, phlogiston is an idea that there was a chemical or element inherent in flammable things that enabled them to burn. We now know this idea is wrong, that things burn by combining with oxygen (generally not in the thing, but in the atmosphere), to make new chemicals. We knew things burned, a hypothesis for how that happened was proposed, and it was shown to be wrong. In searching for what worked, we replaced the hypothesis with a different mechanism, and testing this showed it was accurate.

While the scientific process is pretty good, science is done by humans, who make errors and mistakes. This can lead to scientists having a pet theory that they refuse to let go of even when the evidence says it is wrong, or for movements within science taking a while to be corrected. We know of so many times that scientists have made mistakes because the scientific process works – without science correcting those mistakes, we’d still think they were true. The ideas were tested and found to be faulty, which increased our knowledge. Science is constantly checking itself with newer technology and greater understanding and fixing its own past errors, constantly moving human knowledge towards Truth. Some may see that as an indication that we can’t trust science because it makes errors. To err is human, to fix it takes a process, and we call that the scientific process, or science for short.

When an idea has been tested for a while in multiple ways, and found to not work, then you can trust that it is fiction. Acupuncture is a good example of this. Acupuncture is said to be an ancient Chinese medicine, dating back thousands of years, where piercing the skin in key locations will change the flow of qi energy in humans, which can either harm or heal a person.

While what we recognise to be acupuncture now is fairly new, as the technology to make thin needles is only a few hundred years old, that doesn’t automatically mean that what we call acupuncture in this day and age doesn’t work. To determine if a model works, you need to test it. Many scientific examinations of this have been performed. A few of these tests have shown that acupuncture works better than chance and better than the placebo effect. Acupuncturists state, therefore, that acupuncture is evidence based.

The problem with this claim of evidence based is that most of the evidence says it doesn’t work. For a start, many leading practitioners disagree with each other about where these acupuncture points are, yet state that their version consistently works and is the only model that is true. If where you insert the needle doesn’t matter, then it isn’t acupuncture, it is just stabbing you. If it does matter, then how can there be many systems of “real”? When setting up a trial for acupuncture, trying to compare “real” versus “sham” puncturing is impossible when there is no agreed upon definition for “real”. Even so, scientists have tested most of the leading versions of “real” and found that they consistently give the same result as the sham, which is often no greater than the placebo effect (where you think you are better because you got treated, regardless of whether the treatment was real or not). [Read more: Pseudoscience, Acupuncture is Theatrical Placebo, Acupuncture on Science Based Medicine, Acupuncture: A History]

If there is a great deal of uncertainty in the world’s view of what that thing is, then look to the experts. Often there will be a strong “we mostly think it is ‘This'” with some small groups saying “it could also be That”. It is rare for the experts to have a fairly equal division (50/50) on what the experts think is correct. A recent example of that was with SARS-CoV-2, which causes COVID in humans. During the first 6 months, there was a great deal of uncertainty about what this novel (new) virus did to humans and what was the best way to medically treat it, and socially combat it. Around 6 months later, strong groups of effective methodology had been found and relayed to the population of Earth. There were also isolated pockets of fringe claims that were clearly false, like ivermectin. By the end of a year, most of the world experts agreed on the majority of the management plan with some minor differences in some areas. Despite this consensus agreement born of masses of data and evidence, and despite the hundreds of millions who were saved, most of the world’s people surveyed are not confident that the scientific consensus is accurate, which only shows that people are easily confused by media chasing click bait headlines.

The ivermectin claim is a good example of pseudoscience. On scant evidence, the medication ivermectin, which is excellent for certain conditions, was supposed to significantly help in the treatment of Covid. Since we didn’t know what would or wouldn’t work, and a plausible mechanism for how ivermectin could work for this condition was offered, medical scientists worked quickly to test this hypothesis. Experiments fairly quickly demonstrated that ivermectin didn’t help in any reliable or consistent way, so it was dismissed. Fringe groups continued to try to push for this treatment, despite the massive lack of evidence that it worked (and the probable fraud in the studies that said it did). Oddly, the people who most promoted ivermectin as a treatment stood to gain financially by selling it. As Dr Kyle Sheldrick states, they did not find “a single trial” claiming to show that ivermectin was an effective preventative or treatment, without “either obvious signs of fabrication or errors so critical they invalidate the study” [Ivermectin: How false science created a Covid ‘miracle’ drug]. Effectively, the claim was extraordinary, the evidence to support it was suspect rather than extraordinary, so the claim was discredited and abandoned.

Pseudo Science is the term given to claims that a method works because it was “scientifically proven” when the science does not actually support it. The people making these claims will either cherry pick the experiments that gave them a positive outcome and dismiss the ones that didn’t, or they will create poor quality studies that bias the results, like putting your thumb on the scale, invalidating the result. They will often throw random science words, or science sounding words, into their discourse like “quantum”, or “biofield”, to confuse people who don’t understand the scientific process.

Inherent Problems in the Scientific Process

While the Scientific Process is the best way we currently have to become more accurate in what we know and what we can do, there are some major problems with this process. This comes in two major flavours – human weakness and the limits of flawed systems.

Science is done by people. People need to earn money so that they can live. Money is paid to scientists who get results, which is measured by publishing or the creation of products. To publish means to submit your experimental results or theoretical frameworks in reputable journals, who may reject your paper because it is faulty, repetitive, or not interesting enough.

For scientists, it is often publish or die (career death).

To get your paper published often means ensuring that your paper is not just repeating what someone else has done and getting the same result, because that is considered boring and won’t help sell the publication. Yet the scientific process relies on a measured outcome being shown to be reliable through repetition. This has led to faulty papers not being properly tested because if your test shows the same results, you don’t get published, which risks your career.

Some humans will accidentally misrepresent their data via accidental P hacking. P is a symbol used to represent the probability of getting a result at least as strong as the one you measured if nothing but chance were at work. Having a good P number (small) doesn’t mean that your experiment was right, just that the result is unlikely to be produced by chance alone. That is, an experiment that has an interesting result, but could just be random, is not informative. An experiment whose result is unlikely to be random may be informative and is more likely to get published, but it has yet to be shown to be accurate until other repeats agree with it. Changing how we do the experiment can change the P value, accidentally making the result seem more plausible than it really was, and thus wasting our time thinking we have an interesting result when what we have is a circumstance that biases what we think is random versus not random.

An excellent source for learning about P Hacking is The P-hackers Toolkit by Science Based Medicine.

For example, let us say I have two teams competing in a sports ball competition. I can predict that my team is better, and if I stop the game as soon as my team is beating your team, then I will win that prediction. Clearly my team was shown, in this experiment, to be better than yours. Except that the chances of my team beating your team at some point is close to 100%, unless there is a huge disparity in the skills between the teams. That isn’t a good result, that is a bias of statistics. On the other hand, if we both agreed at the start of the experiments that an hour is long enough for the better skilled team to make themselves known through the score, then whoever has the higher score at the end of that hour is the superior team. I don’t get to change the time of the competition to suit my goal – we see what the teams can do.
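The sports example can be simulated to see how strong this bias is. The sketch below assumes two equally skilled teams, where every scoring event is a coin flip, and compares my "stop as soon as I am ahead" rule with a length agreed in advance. The function names, the 200 scoring events per game and the 2000 games are arbitrary choices for the illustration.

```python
import random


def play(n_events, rng, stop_when_ahead):
    """Simulate one game between two equally skilled teams.

    Each scoring event goes to either team with probability 0.5.
    Returns True if my team is declared the winner.
    """
    lead = 0  # my score minus your score
    for _ in range(n_events):
        lead += 1 if rng.random() < 0.5 else -1
        if stop_when_ahead and lead > 0:
            return True  # I stop the game the moment my team is ahead
    return lead > 0  # otherwise compare the scores once the game is over


def win_rate(stop_when_ahead, n_games=2000, n_events=200, seed=1):
    """Fraction of simulated games in which my team is declared the winner."""
    rng = random.Random(seed)
    wins = sum(play(n_events, rng, stop_when_ahead) for _ in range(n_games))
    return wins / n_games


print(f"Stop when I am ahead: {win_rate(True):.0%} 'wins'")
print(f"Agreed length:        {win_rate(False):.0%} wins")
```

Even though neither team is better, the stop-when-ahead rule declares my team the winner in the large majority of games, while the agreed length gives a little under half (the rest are losses and draws). The only thing that changed is when I chose to stop looking at the data.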

There are many ways that scientists may accidentally change a criterion, which invalidates the results.

There are also many ways that scientists may intentionally bias the results, or rather commit fraud, so that they can get paid, or praise, or further an ideological agenda. After all, scientists are human, and humans can have human weaknesses.

A classic example of this is when Andrew Wakefield published a fraudulent paper, now retracted, in The Lancet in 1998, claiming that vaccines caused autism. Wakefield’s results were poor at best, and investigation showed that they were fraudulent. The general consensus of scientists in the field at the time was that Wakefield was wrong, and within 1 year, Wakefield’s claims had been fully checked, tested and found to be false. Unfortunately, many people believed Wakefield’s fearful conclusion, which has led to many people dying from preventable diseases. Wakefield bears much of the responsibility for those deaths, and for the continued deaths and illness caused by people catching preventable diseases after refusing other vaccinations.

While science was able to quickly correct the erroneous results created by Wakefield, the errors in the public zeitgeist and the harm to lives caused by this fraud are still being felt by millions of people.

How to Use Scientific Knowledge Properly

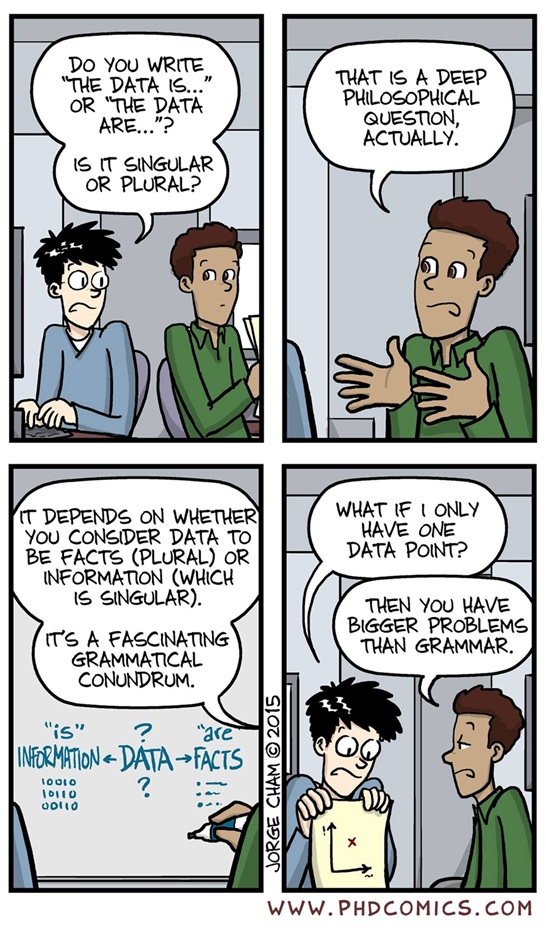

Evidence and Data

Not all evidence is created equal. In fact, evidence exists on a spectrum of reliability. My friend’s mother’s cousin’s friend’s observation is considered hearsay, and while it may be interesting, its quality of evidence is very low. What I see directly with my senses is far more reliable than hearsay, but since we are merely human and thus easily fooled, my own eye witness is low quality. Careful measurements in a controlled study are quality evidence. A consensus of results from multiple independent double blind or otherwise appropriately crafted studies with clearly pre-stated goals, method and statistical analysis methods is good quality.

An opinion is not data; it is either you stating your bias, or you stating your personal subjective preferences. For example, I quite like pineapple on my pizza, while I am aware that there are many people who do not like pineapple on their pizza. This is an example of a personal subjective preference, not an indication of whether pizza is objectively better with pineapple on it. An opinion about whether a minority group should have human rights or not is not an opinion, it is hate speech (unless it is about Nazis or a similar hate group – see the Paradox of Tolerance to understand why). A person’s opinion is not a fact, although it can in some instances be the beginnings of evidence. A single opinion has very poor evidence quality or is irrelevant, while a plethora of opinions can make good social data, but is irrelevant when it comes to physics.

My most common critique on research papers is how much evidence was gathered (sample size), the quality of the evidence gathering (trying to get an idea of how many alcoholics are in a country by sampling people in bars is poor gathering), whether the results match both the conclusion and the abstract (often they mismatch), and if the study is making too many assumptions before doing the study (looks hard at psychology). We discuss this further below in the section Reading a Paper.

Bayesian Statistics, the foundation of the Scientific Method

Bayesian Statistics uses Bayes’ Theorem to compute and update probabilities. Bayesian Statistics is a theory in the field of statistics based on the Bayesian interpretation of probability, where probability expresses a degree of belief in an event.

History: Thomas Bayes developed his theorem in the 18th century and it was published posthumously in 1763 by his friend Richard Price, since Bayes didn’t submit it prior to his passing. Incidentally, Pierre-Simon Laplace published a very similar theorem independently in 1774, apparently unaware of Bayes’ Theorem. Laplace went on to develop the Bayesian Interpretation of Probability, a system where probability is interpreted as reasonable expectation. [“Bayes’ Theorem”, Wikipedia, “Bayesian Probability”, Wikipedia, “Bayesian Statistics with Hannah Fry”, Stand Up Math].

The basic idea of this Bayesian Statistics is that regardless of where your current belief in an event is, repeated experiments or data will shift the belief in the event. That is, with more data, you get more information, which allows you to correct how likely the event is.

Or put another way, you come up with a hypothesis (an explanation for how something might work). Unfortunately, you don’t know how likely your hypothesis being correct is. As you gather data related to your hypothesis, you update the probability that your hypothesis works, either positively or negatively depending on how well the data supports the hypothesis; that is you shift the probability (confidence / belief / likelihood) that the hypothesis is correct. Initial data will likely shift that probability quite a bit (unless you just got lucky), and with enough data, you will find that the confidence (belief / probability) of that hypothesis doesn’t change much. At the point that the probability stops moving much, you have a fairly accurate level of confidence in the hypothesis.
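This updating can be shown with a small worked example. It is a sketch under made-up assumptions: the hypothesis is “this treatment helps 70% of people” and the alternative is “it helps 50%, no better than a coin flip”. The observations are an invented list in which 7 out of every 10 people improve, and the names `update` and `run` are just for this illustration.

```python
def update(prior, likelihood_if_true, likelihood_if_false):
    """One application of Bayes' Theorem: belief in the hypothesis after one observation."""
    evidence = prior * likelihood_if_true + (1 - prior) * likelihood_if_false
    return prior * likelihood_if_true / evidence


def run(prior, observations, p_if_true=0.7, p_if_false=0.5):
    """Update the belief once per observation and keep the whole history."""
    belief = prior
    history = [belief]
    for improved in observations:
        if improved:
            belief = update(belief, p_if_true, p_if_false)
        else:
            belief = update(belief, 1 - p_if_true, 1 - p_if_false)
        history.append(belief)
    return history


# Invented data: 1 = person improved, 0 = person did not (7 in every 10 improve).
observations = [1, 1, 1, 0, 1, 1, 0, 1, 1, 0] * 5

open_minded = run(0.5, observations)  # starts undecided
sceptical = run(0.1, observations)    # starts out doubting the hypothesis

for n in (0, 5, 10, 25, 50):
    print(f"after {n:2d} observations: "
          f"open-minded {open_minded[n]:.3f}, sceptical {sceptical[n]:.3f}")
```

Both starting beliefs move in the same direction as the data comes in, and the sceptic ends up mostly persuaded too, just a little later. In this sketch neither belief ever lands exactly on 0 or 1.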

You can never be 100% confident that your hypothesis is correct, since the next bit of data might show a flaw in the hypothesis. Nor, according to Bayes’ Theorem, can you be 0% confident that the hypothesis is correct (or 100% that it is wrong), since again the next bit of data might support the hypothesis. If we could imagine an infinite series of data that we could check, we can approach 100% or 0%, but due to the nature of infinity, we can’t ever get there.

However, as we approach a particular number of confidence, we can begin to assume that without Extraordinary Evidence (as said before) to the contrary, we have got a good probability of how true (accurate) the hypothesis is.

This, effectively, is science. This is why

- Scientists are reluctant to talk in absolutes of “this is right and that is wrong”, since they are aware that the next evidence might shift the exact position of confidence a bit.

- A few studies that contradict a well researched idea (hypothesis, Law, Theory) do not upturn the applecart and rewrite the science books.

- Science updates its confidence in hypotheses all of the time as new data and research are gathered, and new hypotheses are proposed that might be more accurate than previous hypotheses.

Scientific Consensus

Science has got big and complex. As such, there are many fields of science, and scientists will specialise in a field for most of their career. They will get very good at that particular field and have a passing knowledge of other fields. Based on what the experiments in that field have shown, a general consensus of what Scientific Truth is for that field will be formed – the best truth we have right now that is considered to be the closest to Real Truth.

On average, if you are going to bet on something being right or wrong, if you bet with the Scientific Consensus, you’ll almost always win.

As science progresses, that consensus opinion becomes more refined and closer to Truth, and only rarely does it say “we were very wrong” and do a major course correction.

Often we view media that says “scientist claims that all scientists are wrong about X”.

- The Wrong Expert: Some of the time, the media has a scientist giving an opinion that is outside of their area of expertise. In these cases, ignore that opinion. It isn’t an expert opinion. For example, I’m an expert on mental health, so my expert opinion should be about mental health. If I give you advice about your car, it isn’t an expert opinion. It is a general opinion and shouldn’t be taken too seriously. We often think because this person is a scientist, they are an expert in all of science, and that just isn’t the case at all.

- Untested New Research: It takes time for new research to be properly tested by other scientists to confirm or deny that the new claim is accurate. Consider how often you have seen this claim, only for that claim to disappear, slinking off into the night and hoping that no one paid attention? That is because follow up research showed that there was an error in that paper. Pro tip: wait a few years before investing in the claim.

- Click Bait: Often what the title and general article claim the experiment showed is not at all what the experiment showed. The mainstream article, paper title and paper abstract are rarely written by the authors of the paper. Only around 1/3 of the time are the paper title and abstract in line with the paper.

Scientific Consensus changes slowly, because good science takes time to do properly.

When Scientific Consensus is Wrong

While betting on the consensus of expert scientists is generally the right call and will win most bets, Scientific Consensus can be wrong. All that it takes to show that an idea, hypothesis or Theory is wrong is good evidence that shows that it is wrong.

It is rare that such evidence shows that the entire basis for the current scientific knowledge in that field is wrong. Humans have been doing good science in most scientific fields for around 400 years, and excellent science for the last 100 years. In the very early days of good science, it did not take much evidence to topple a long held superstition that was considered to be good science. These days, so much testing in many fields has occurred that it is highly unlikely that scientific understanding on a broad area has been fundamentally wrong.

What does happen is that specific subfields of scientific research show that our understanding is incomplete. For example, Isaac Newton published his physics of motion in 1687, and this was tested over and over again without fail for centuries. An anomaly in the orbit of Mercury compared to what we expected based on Newton’s physics indicated that something was wrong. This was solved by Albert Einstein when he published General Relativity in 1915, building on the Special Relativity he published in 1905. This did not show that Newton’s physics was wrong, just limited. Newton’s physics works well when gravity is weak and speed is slow, while Einstein’s physics works regardless of mass and speed, but is difficult to use for normal human environments.

Scientific Consensus can be wrong. When Einstein published his relativity, showing that time was not invariant, many people were upset. A letter with 100 scientists’ signatures was written against Einstein’s paper. Einstein retorted “to defeat relativity one did not need the word of 100 scientists, just one fact.” Or as Richard Feynman pointed out, “It doesn’t matter how beautiful your theory is, it doesn’t matter how smart you are. If it doesn’t agree with experiment, it’s wrong.”

This needs to be remembered in the context of Carl Sagan’s quote: “Extraordinary Claims require Extraordinary Evidence.” For you to show that the Scientific Consensus is wrong, you need to be able to show your evidence for it, and it has to actually work reliably and consistently. Often the claims that try to overturn scientific knowledge and consensus falter under scrutiny because the evidence was fiction or mistaken.

One of the beautiful features of science is that when someone makes a claim, so long as that claim has some semblance of substance, it will be checked and rechecked to see if it is true. When it is found to be false, the claim is shown to be an error or the claimant fraudulent, and if the claim turns out to be true, then scientific knowledge is updated. That is, on average, Science corrects itself towards greater accuracy and Truth most of the time.

What is odd is that the few times that science becomes less accurate, or realises it was wrong and corrects itself, are used by grifters to try to undermine the process, rather than being highlighted as evidence that the Scientific Process is working.

Reading a Paper

Scientific papers are written by experts for experts. There is quite a bit of assumed knowledge due to that, plus the authors are trying to say as much as they can in some fairly restrictive word limits, so they don’t have time to explain terms.

Tip 1: Use Wikipedia to

- Understand the industry terms

- Get a good middle ground understanding of the topic

A single paper isn’t enough to understand what is going on. Some papers have an excellent summary of knowledge at the time of writing in their introduction, which can help you get up to speed; however, keep in mind that this may be skewed to the author’s bias.

Tip 2: Read multiple papers, including dissenting opinions to what you have found.

To get a good idea of what is going on, you mostly need to read several papers. If you aren’t familiar with the topic, read a few meta analyses of the question you have.

Know the difference between a test study, which will have a small sample size and a limit to how much follow up they did, and a more extensive series of studies, which will have a collective large sample size and consistent results. Know the difference between a controlled experiment, where confounding variables are well controlled for, and a retrospective analysis, where we are looking only to see if there is a pattern that might connect two or more elements.

Tip 3: The CRAAP Test

- Currency: Is the information up to date?

- Relevance: Does it answer your specific question, or give you useful information?

- Authority: Is it published in a suitable journal, does the author have good standing in the relevant field of expertise?

- Accuracy: Are the findings supported by evidence, was the evidence adequate to the conclusion?

- Purpose: Is the author informing you of findings rather than persuading you to an opinion?

When you read the paper, you want to consider the sample size, what was included and excluded in the paper, what variables were controlled, if the paper actually tested what they thought they did, and if the conclusions match the results. You’d be shocked at how often this doesn’t happen, especially in psychology papers. Even a poorly written paper can have some interesting ideas for you to consider and do some research on. Some papers are too faulty to take seriously. A good measure of reliability is the citation count, but watch out for controversial papers being over cited.

- Is the paper / study posted in a recognised, quality journal?

  - Yes: then it has likely at least passed peer review.

  - No (for example, pay to publish or on their own website only): be very suspicious.

- If this is a meta review / meta analysis / systematic review:

  - Good signs:

    - If it has been released in the last 7 years, then you can likely trust it.

    - Excluded papers are due to not meeting the specific criteria questions rather than quality.

    - It agrees with other recent meta reviews, or has a good reason why it doesn’t.

  - Concerning signs:

    - The ratio of papers rejected for inclusion was over 50% due to issues with quality, yet the meta analysis finds the thing plausible.

    - The number of included papers is lower than 30, yet the meta analysis is glowing.

- If this is a stand alone study:

  - Study, actively testing groups:

    - Good signs:

      - The sample size is above 50 (for social / psychology) in every test group, unless it is clearly stated as a pilot group.

      - The abstract, results and conclusion align.

      - Minimal assumptions are made prior to the study (avoiding the begging the question logical fallacy).

      - The method is published prior to the study being done (this is a new standard, studies older than 2020 aren’t held to this standard).

      - The study considered that the question might be wrong (checking both yes this is true, and no this isn’t true).

    - Concerning signs:

      - Not double blinded (if testing people) without a good explanation for how they excluded participant bias.

      - Test group isn’t compared to a placebo.

      - The study is performed by those who benefit from a positive outcome.

  - Retrospective / mass data review:

    - Good signs:

      - Large sample size.

      - Intelligent design avoiding confounding factors.

      - Clear signal.

    - Concerning signs:

      - Number of participants is low.

      - Lots of self report.

      - No discussion about excluding confounding factors and steps to avoid including or factoring in those.

      - Method does not seem to lead to the conclusion.

References

Scientific Theory, Wikipedia, https://en.wikipedia.org/wiki/Scientific_theory

Why Science Doesn’t Make Laws Anymore, 2026, StarTalk, YouTube, https://youtu.be/EVJdwD7coQ4?si=ajsyfHIh_ZbR0mT6

Empirical Model-Building and Response Surfaces, (1987), p. 424, George E.P. Box & Norman R. Draper.

Acupuncture Is Theatrical Placebo, (2013), Colquhoun, D., & Novella, S. P., journal Anesthesia & Analgesia, 116(6), 1360–1363. https://doi.org/10.1213/ane.0b013e31828f2d5e

Acupuncture, (2018), Sciencebasedmedicine.org. https://sciencebasedmedicine.org/reference/acupuncture/

Acupuncture: A History, (2005, February 22), Stephen Basser, M.D., https://quackwatch.org/acupuncture/hx/basser/

Ivermectin: How false science created a Covid “miracle” drug, (2021, October 6), Schraer, R., & Goodman, J., BBC News. https://www.bbc.com/news/health-58170809

The P-hackers Toolkit, (2020, April 30), Sciencebasedmedicine.org, https://sciencebasedmedicine.org/the-p-hackers-toolkit/

Paradox of tolerance, (2019, November 11), Wikipedia Contributors, Wikipedia; Wikimedia Foundation. https://en.wikipedia.org/wiki/Paradox_of_tolerance

Bayes’ theorem, (2019, July 27), Wikipedia; Wikimedia Foundation, https://en.wikipedia.org/wiki/Bayes%27_theorem

Bayesian probability, (2020, April 26), Wikipedia, https://en.wikipedia.org/wiki/Bayesian_probability

Bayesian Statistics with Hannah Fry, (2020), YouTube, https://youtu.be/7GgLSnQ48os?si=F4QDV3oEt1iHOzfw

Piled Higher and Deeper, Jorge Cham, comic 1816, www.phdcomics.com